When reporting against an OLTP system, in many ways, OBIEE works like an ETL tool, transforming the source system data and presenting it as a star schema. After years of writing ETL code, if there’s one thing I hate to be without, it’s regular expressions. So, when working on a project to map an OLTP source system to a logical model in OBIEE, I came across the following issue, and knew immediately that I would need regular expressions to solve it.

The CONTACT table in the source system had two columns storing the name: FIRST_NAME and LAST_NAME. There were two different processes that wrote entries in the CONTACT table… and one of them was faulty, writing the entire name in the FIRST_NAME column, though it was never corrected. So the following is a decent representation of what the data looked like:

SQL> create table CONTACT (contact_id NUMBER, first_name varchar2(50), last_name varchar2(50));

Table created.

Elapsed: 00:00:00.02

SQL> insert into CONTACT values (1, 'Bryson, Stewart W.', NULL);

1 row created.

Elapsed: 00:00:00.02

SQL> insert into CONTACT values (2, 'Mead, Jon', NULL);

1 row created.

Elapsed: 00:00:00.00

SQL> insert into CONTACT values (3, 'Mark', 'Rittman');

1 row created.

Elapsed: 00:00:00.00

SQL> select * from CONTACT;

CONTACT_ID | FIRST_NAME | LAST_NAME

---------- | -------------------- | --------------------

1 | Bryson, Stewart W. |

2 | Mead, Jon |

3 | Mark | Rittman

3 rows selected.

Elapsed: 00:00:00.00

SQL>

I needed to map the BMM such that I could return FIRST_NAME and LAST_NAME regardless of whether the entire name was concatenated into the FIRST_NAME, or whether it was correctly distributed across both columns. Additionally, the fact that the middle initial needed to be included with FIRST_NAME also proved a little troubling. At the end of the day, this is what I came up with in SQL:

SQL> SELECT CASE 2 WHEN last_name IS null THEN trim(regexp_substr(first_name,'[^,]+$')) 3 ELSE first_name 4 END first_name, 5 CASE 6 WHEN last_name IS null THEN regexp_substr(first_name,'^([^,]+)') 7 ELSE last_name 8 END last_name 9 FROM contact 10 / FIRST_NAME | LAST_NAME -------------------- | -------------------- Stewart W. | Bryson Jon | Mead Mark | Rittman 3 rows selected. Elapsed: 00:00:00.01 SQL>

To explain a bit, I’ll start with how I extracted the first name information from the FIRST_NAME column. I needed to start at the comma and then get the entire string until the end of the column. So I used the [^] structure in regular expressions, which basically says, return anything EXCEPT the character between the brackets and after the carrot (^). The plus (+) instructs the RegEx engine to return one or more instances of the previous structure. And at the end, the dollar sign ($) dictates that the entire string must run to the end of the column value. So taken all together, [^,]+$ instructs the RegEx engine to:

“Start at the first character that is not a comma, and return all non-comma characters all the way to the end of the column value.”

The only kludge introduced here is that the first non-comma character was actually a space, and to remove it, I simply used a TRIM. If some one has a way to do this without a TRIM, then I’d be glad to hear it.

To extract the last name information from the FIRST_NAME column, I used a similar mechanism, except that, instead of using the dollar sign ($) at the end, I put the carrot (^) at the beginning. It’s the same concept: it means that the expression returned has to begin at the start of the column value. So, the ^([^,]+ instructs the RegEx engine to:

“Start at the beginning of the column value, and return the whole string until a comma is encountered.”

Easy enough.

Now I want OBIEE to accept this SQL in the BMM. The only issue here is that OBIEE does not support regular expressions in it’s SQL language, so I have to use the EVALAUTE command to pass Oracle’s regular expression syntax back through to the database. So I’ll demonstrate how to do this, but first I’ll need to create a fact table to join to the CONTACT table in OBIEE.

SQL> CREATE TABLE activity (contact_id number, activity_date date, num_calls NUMBER);

Table created.

Elapsed: 00:00:00.04

SQL> INSERT INTO activity VALUES (1, SYSDATE-2, 10);

1 row created.

Elapsed: 00:00:00.00

SQL> INSERT INTO activity VALUES (2, SYSDATE-1, 20);

1 row created.

Elapsed: 00:00:00.00

SQL> INSERT INTO activity VALUES (3, sysdate, 30);

1 row created.

Elapsed: 00:00:00.00

SQL>

SQL> SELECT * FROM activity;

CONTACT_ID | ACTIVITY_DATE | NUM_CALLS

---------- | ---------------------- | ----------

1 | 12/16/2009 10:21:34 AM | 10

2 | 12/17/2009 10:21:34 AM | 20

3 | 12/18/2009 10:21:34 AM | 30

3 rows selected.

Elapsed: 00:00:00.01

SQL>

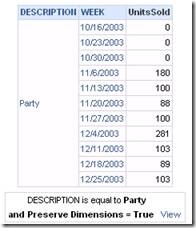

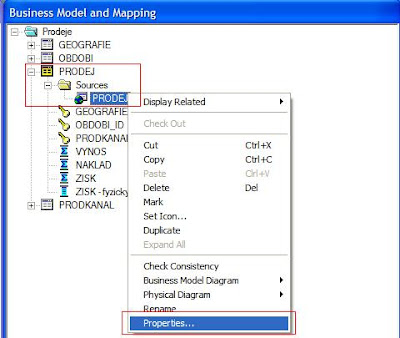

To demonstrate the whole process in OBIEE, I first built the BMM to bring the data in how it is from the database:

To generate the correct data, using the regular expressions developed above, here is how I mapped the First Name attribute:

CASE

WHEN "bidw".""."STEWART"."CONTACT"."LAST_NAME" IS NULL

THEN Trim(BOTH ' ' FROM Evaluate('regexp_substr(%1,''[^,]+$'')', "bidw".""."STEWART"."CONTACT"."FIRST_NAME" ))

ELSE

"bidw".""."STEWART"."CONTACT"."FIRST_NAME"

END

And here is how I mapped the Last Name attribute:

CASE

WHEN "bidw".""."STEWART"."CONTACT"."LAST_NAME" IS NULL

THEN Evaluate('regexp_substr(%1,''^[^,]+'')', "bidw".""."STEWART"."CONTACT"."FIRST_NAME" )

ELSE

"bidw".""."STEWART"."CONTACT"."LAST_NAME"

END

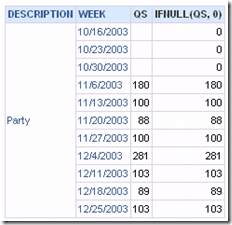

And finally… the results: